Decoding what artificial intelligence means for healthcare

By MaRS Staff | March 3, 2026

With the launch of Anthropic’s Claude for Healthcare in the United States, experts assess how AI could help — or hinder — Canada’s healthcare system.

Canada’s healthcare system is chronically understaffed: Six million Canadians are without a regular healthcare provider. A recent Health Canada study concluded that the country’s medical schools cannot train enough doctors to ever close the gap (to meet demand, we’d need another 22,000 physicians). And of the doctors we have, nearly half are feeling burned out.

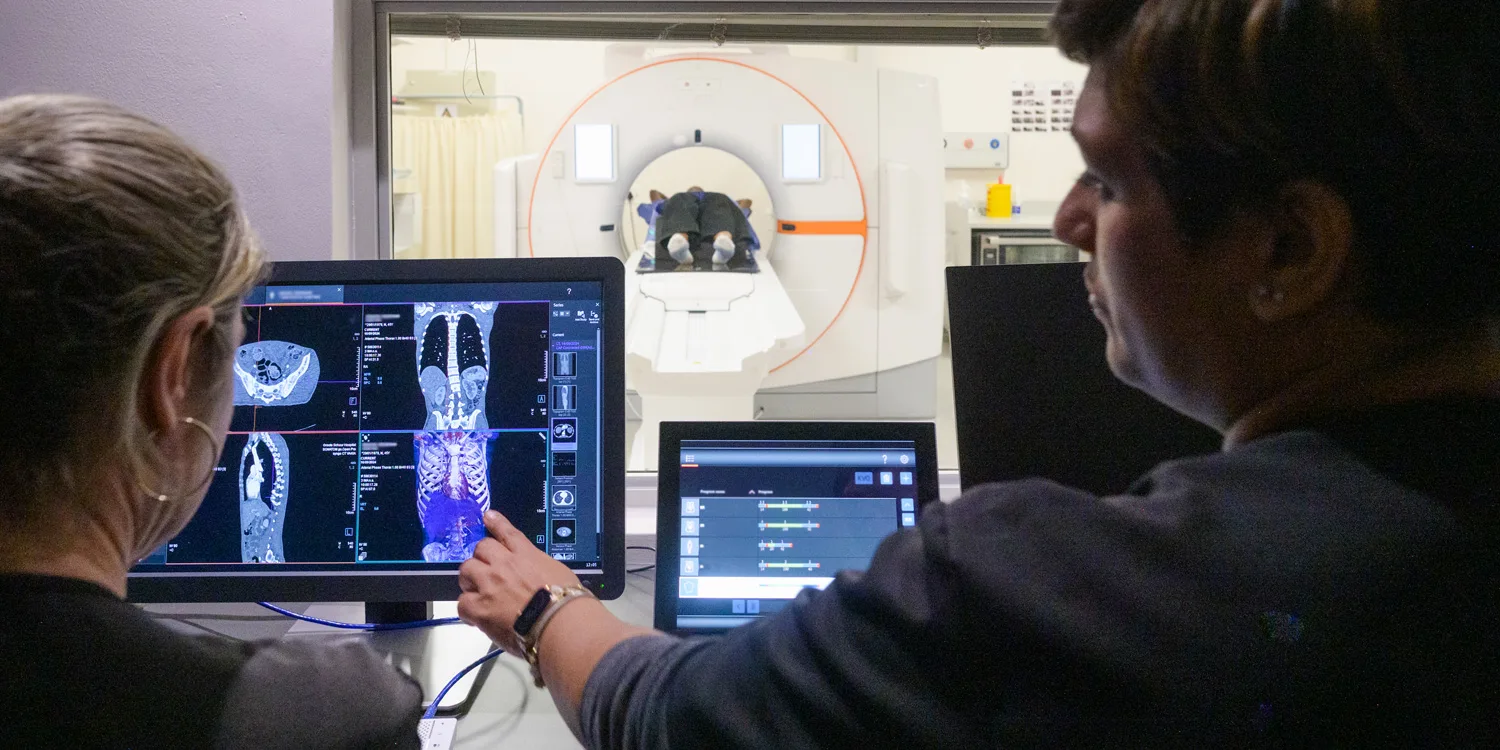

Artificial intelligence is an obvious solution to our lack of people power. It is already at work behind the scenes in hospitals, reviewing medical images and monitoring patients’ charts for signs of deterioration. Nearly 60 percent of doctors who use AI say it helps them speed through administrative tasks.

But AI may soon play a larger role in our healthcare system. Recently, the companies behind ChatGPT and Claude unveiled new health-related features for U.S. users. (These tools are currently not available in Canada.) Both platforms can analyze users’ medical records, pull research from online databases and explain test results, among other functions. Claude can also help healthcare providers communicate with patients and insurance companies.

According to OpenAI, 230 million people ask ChatGPT for health information each week. Online threads are full of people recounting how AI helped diagnose a rare disease or make sense of unusual lab results. Patients with long-term conditions are also using it for advice on managing their symptoms and medications.

However, AI is still prone to hallucinations — responding to users with information that is inaccurate or, in some cases, wholly fabricated — and questions remain about whether the technology can be trusted. A new study from Germany found Google’s AI overviews are more likely to cite YouTube than any medical site for health queries.

Anthropic and OpenAI say they comply with healthcare privacy laws and rule out using chats on their health platforms to train their models. But experts have pointed out that chatbot users have fewer legal safeguards than patients in a doctor’s office, where there are robust privacy rules and a duty-of-care expectation. In addition, AI companies often put disclaimers in the fine print that disavow the use of their tools for diagnosis or health advice, pushing responsibility for evaluating the validity of the information onto the patient.

So, could prescribing this technology be a way to heal the infirmities of Canada’s medical system? Or will Dr. Robot prove to be a quack? We asked three experts for their views on the rise of AI in healthcare. They all expressed cautious optimism.

“These chatbots tend to be significantly more empathetic than the average clinician”

Muhammad Mamdani, clinical lead in artificial intelligence at Ontario Health and director of the University of Toronto’s Temerty Centre for Artificial Intelligence Research and Education in Medicine. Mamdani has been involved in the development of more than 50 AI tools used in hospitals.

The pros: “It has the potential to be incredibly exciting, if it’s done well. Currently, the health system is fairly broken. Fifteen to 20 percent of people who go to the emergency department shouldn’t be there. We have long wait times and the decisions we make around certain treatments are poor. AI has the potential to say, ‘The emergency department is really crowded, and based on what you’ve told me, it sounds like you have a UTI. You may want to consider going to a pharmacy.’

“AI also makes available all sorts of health information that previously was only meant for clinicians. A doctor usually has seven to 13 minutes with you, and doesn’t know how you slept last night, and may not be able to understand all the patterns because they weren’t there. But now you’re wearing this watch that tracks a bunch of stuff. You can feed the data into an AI and have a conversation with it for hours. And several studies have shown that these chatbots tend to be significantly more empathetic than the average clinician.”

The caveats: “I’m a little concerned about making sure these solutions have been vetted before making them publicly available. While there’s potential to really do good for people, for clinicians and for the healthcare system, there’s also potential to make things worse. AI is linked to the web and the best available evidence and guidelines, which can be so powerful. But it doesn’t have the experience that a clinician does. It can lie, it can make up stuff and confabulate or hallucinate. Studies have looked at ChatGPT for health, and the accuracy of the information can range from 20 percent to 95 percent. The 20 percent worries me.”

“It could allow a patient to be more direct in the treatment they’re seeking”

Lori Casselman, CEO and founder of June Health, a virtual care platform for women’s health.

The pros: “Data and information can empower a patient to advocate for themselves. The more education and understanding you have, the more informed a conversation you can have. In cases where a practitioner doesn’t have a particular specialization, it could allow a patient to be more direct in the treatment options or referrals they’re seeking. But being able to access that data in isolation is not what we’re striving toward. Putting reliable data in a patient’s hands can be very valuable, but [it should be] in partnership with care providers and clinicians who can support a holistic treatment plan.”

The caveats: “Historically, women’s health has been under-researched, under-diagnosed and often dismissed. Leveraging AI systems that have been trained on existing healthcare data could unintentionally reinforce these gaps. AI can absolutely improve women’s care — if it’s designed and ethically trained with women’s health, symptoms and care realities in mind. So, it’s important that we have broad and inclusive data sets for model training.”

“AI will not replace a physician — nor do I think it should”

Dr. Margot Burnell, president of the Canadian Medical Association, which has advocated for greater use of AI — in tandem with thorough regulatory oversight — to relieve the administrative burden on physicians while safeguarding privacy.

The pros: “AI will be important in synthesizing the rapidly expanding body of knowledge in the medical field. Personalized medicine and therapeutic development are taking off, and having a reputable site where that information is accurately distilled and presented will be a valuable tool for physicians and care teams.

“AI will not replace a physician — nor do I think it should. Part of being a physician is developing that trusting relationship. It’s presenting options to patients, understanding their goals for care, giving them enough information to make the decision that’s best for them.

“Patients will come to physicians’ offices with information that has been generated through AI. So, patients and citizens will need to be not only health literate, but also AI literate. If we can educate the public to access safe and appropriate information, and that enables them to understand and be more involved in their care, I think that’s a great attribute.”

The caveats: “AI features that decrease administrative burden, improve patient flow or transcribe notes are lower risk. But as you move toward higher risk, with managing side effects and treatment algorithms, then there needs to be caution. Physicians using AI need to know what the training set was — is it representative of the patient that is sitting in front of them? Has it respected confidentiality, digital sovereignty? Who owns the data, who can access the data?

“It’s like drug development: You need the pharmaceutical industry to provide the drug and scale up manufacturing. But you need a regulatory authority to ensure that the drug and all its development have been seen by an independent body, that it’s safe, that its side-effect profile risk has been clearly articulated. Having oversight and regulations and knowing where the data is and who has access to it are critically important.”

Interviews were condensed and edited for clarity.


Main image source: iStock

MaRS Staff